Bridging Input Feature Spaces Towards Graph Foundation Models

Authored by Moshe Eliasof, Krishna Sri Ipsit Mantri, Beatrice Bevilacqua, Bruno Ribeiro, Carola-Bibiane Schönlieb

Published in The Fourteenth International Conference on Learning Representations (ICLR) 2026

Abstract

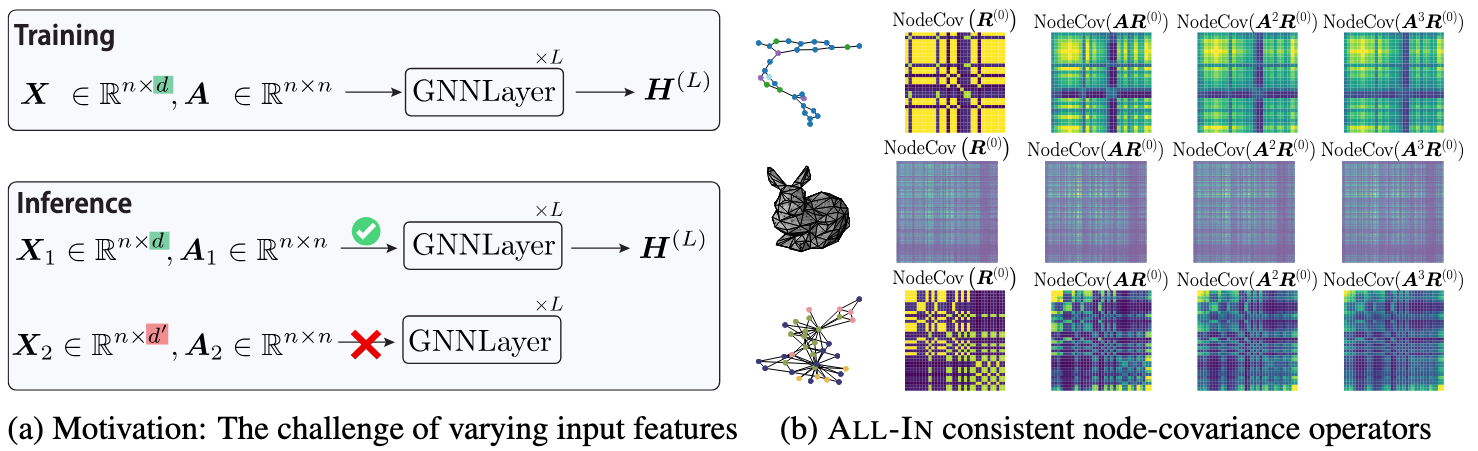

Unlike vision and language domains, graph learning lacks a shared input space, as input features differ across graph datasets not only in semantics, but also in value ranges and dimensionality. This misalignment prevents graph models from generalizing across datasets, limiting their use as foundation models. In this work, we propose ALL-IN, a simple and theoretically grounded method that enables transferability across datasets with different input features. Our approach projects node features into a shared random space and constructs representations via covariance-based statistics, thus eliminating dependence on the original feature space. We show that the computed node-covariance operators and the resulting node representations are invariant in distribution to permutations of the input features. We further demonstrate that the expected operator exhibits invariance to general orthogonal transformations of the input features. Empirically, ALL-IN achieves strong performance across diverse node- and graph-level tasks on unseen datasets with new input features, without requiring architecture changes or retraining. These results point to a promising direction for input-agnostic, transferable graph models.
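The abstract's core idea (random projection followed by covariance-based statistics) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the Gaussian projection, the use of `np.cov` across the random dimensions, the helper name `node_covariance`, and all sizes are assumptions chosen for illustration. Under these assumptions the expected operator equals the Gram matrix `X @ X.T`, which does not change when the input features are rotated or permuted.

```python
import numpy as np


def node_covariance(X, k, rng):
    """Hypothetical helper: project (n, d) node features onto k random
    Gaussian directions and return the (n, n) covariance across them."""
    d = X.shape[1]
    W = rng.standard_normal((d, k))  # assumed projection: i.i.d. N(0, 1) entries
    Z = X @ W                        # (n, k) features in the shared random space
    return np.cov(Z)                 # rows = nodes, columns = random dimensions


rng = np.random.default_rng(0)
X = rng.standard_normal((5, 3))      # toy graph: 5 nodes, 3 input features

# With many random dimensions, the operator approaches the Gram matrix X X^T.
C = node_covariance(X, k=20000, rng=rng)
gram = X @ X.T
rel_err = np.linalg.norm(C - gram) / np.linalg.norm(gram)

# Permuting the feature columns is the same as permuting the rows of W.
# Since W has i.i.d. entries, the permuted W has the same distribution,
# so the operator is invariant in distribution to feature permutations.
perm = rng.permutation(3)
W = rng.standard_normal((3, 64))
same_projection = np.allclose(X[:, perm] @ W[perm], X @ W)

# The expected operator X X^T is unchanged by any orthogonal matrix Q.
Q, _ = np.linalg.qr(rng.standard_normal((3, 3)))
gram_rotated = (X @ Q) @ (X @ Q).T
```

In this sketch the output is always an n-by-n matrix regardless of the input dimensionality `d`, which is what removes the dependence on the original feature space.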

Resources

This work was done while Ipsit was pursuing his M.Sc. at Purdue University with Prof. Bruno Ribeiro.

Bibtex

@inproceedings{eliasof2026bridging,
  author = {Moshe Eliasof and Krishna Sri Ipsit Mantri and Beatrice Bevilacqua and Bruno Ribeiro and Carola-Bibiane Schönlieb},
  title = {Bridging Input Feature Spaces Towards Graph Foundation Models},
  booktitle = {The Fourteenth International Conference on Learning Representations (ICLR)},
  year = {2026},
}