Towards Improved Sentence Representations using Token Graphs

Authored by Krishna Sri Ipsit Mantri, Carola-Bibiane Schönlieb, Zorah Lähner, Moshe Eliasof

Published in The Fourteenth International Conference on Learning Representations (ICLR) 2026

Abstract

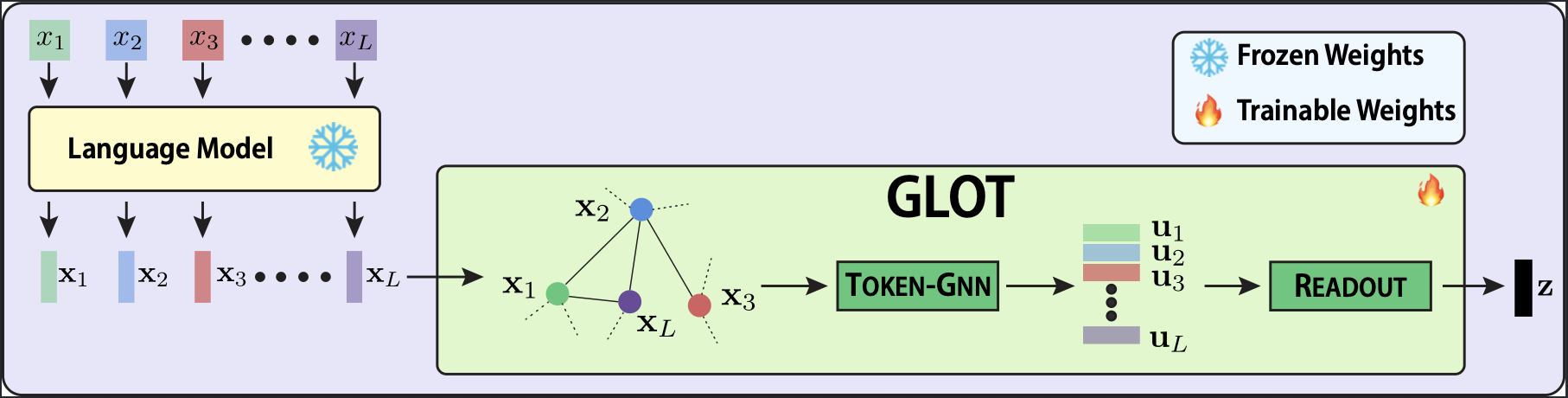

Obtaining a single-vector representation from the token-level outputs of a Large Language Model (LLM) is a critical step for nearly all sentence-level tasks. However, standard pooling methods such as mean or max aggregation treat tokens as an independent set, which discards the rich relational structure captured by the model's self-attention layers and leaves them susceptible to signal dilution. To address this, we introduce GLOT, a lightweight, structure-aware pooling module that reframes pooling as relational learning followed by aggregation. Operating on the outputs of a frozen LLM, GLOT first constructs a latent token-similarity graph, then refines token representations with a graph neural network, and finally aggregates them using a readout layer. Experimentally, our approach is remarkably robust and efficient: on a diagnostic stress test where 90% of tokens are random distractors, GLOT maintains over 97% accuracy while baseline methods collapse. Furthermore, it is competitive with state-of-the-art techniques on benchmarks such as GLUE and MTEB while using 20x fewer trainable parameters and training over 100x faster than parameter-efficient fine-tuning methods. Supported by a theoretical analysis of its expressive power, our work shows that learning over token graphs is a powerful paradigm for the efficient adaptation of frozen LLMs. Our code is published at https://github.com/ipsitmantri/GLOT.
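
The three stages named in the abstract (graph construction, graph-based refinement, readout) can be illustrated with a small NumPy sketch. This is not the released GLOT implementation: the cosine-similarity threshold, the single mean-neighbor update, the softmax readout, and the names `token_graph_pool`, `W_msg`, and `w_score` are all assumptions made for illustration, and the random matrix `H` merely stands in for the token outputs of a frozen LLM.

```python
import numpy as np


def token_graph_pool(H, W_msg, w_score, threshold=0.5):
    """Pool token embeddings H of shape (n_tokens, d) into one vector of size d.

    Every design choice below (cosine threshold, mean-neighbor update,
    softmax readout) is an illustrative assumption, not the paper's method.
    """
    # 1) Graph construction: link tokens whose cosine similarity exceeds the threshold.
    norms = np.linalg.norm(H, axis=1, keepdims=True)
    H_unit = H / np.clip(norms, 1e-12, None)
    A = (H_unit @ H_unit.T > threshold).astype(H.dtype)
    np.fill_diagonal(A, 1.0)  # self-loops guarantee each token has a neighbor

    # 2) Refinement: one round of mean-neighbor message passing with a residual.
    A_norm = A / A.sum(axis=1, keepdims=True)
    H_ref = H + np.tanh(A_norm @ H @ W_msg)

    # 3) Readout: softmax-weighted sum of the refined token vectors.
    scores = H_ref @ w_score
    weights = np.exp(scores - scores.max())
    weights /= weights.sum()
    return weights @ H_ref


rng = np.random.default_rng(0)
n_tokens, d = 6, 8
H = rng.normal(size=(n_tokens, d))     # stand-in for frozen LLM token outputs
W_msg = rng.normal(size=(d, d)) * 0.1  # would be learned; random here
w_score = rng.normal(size=d) * 0.1     # would be learned; random here

sentence_vec = token_graph_pool(H, W_msg, w_score)
print(sentence_vec.shape)  # (8,)
```

In this sketch only `W_msg` and `w_score` would be trained, which reflects the abstract's point that the LLM stays frozen and the pooling module is small. With both set to zero the function reduces to plain mean pooling, so the graph step can be read as a learned correction on top of that baseline.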

BibTeX

@inproceedings{mantri2026glot,
  author = {Krishna Sri Ipsit Mantri and Carola-Bibiane Schönlieb and Zorah Lähner and Moshe Eliasof},
  title = {Towards Improved Sentence Representations using Token Graphs},
  booktitle = {The Fourteenth International Conference on Learning Representations (ICLR)},
  year = {2026},
}